Designing Difficulty that Respects the Learner

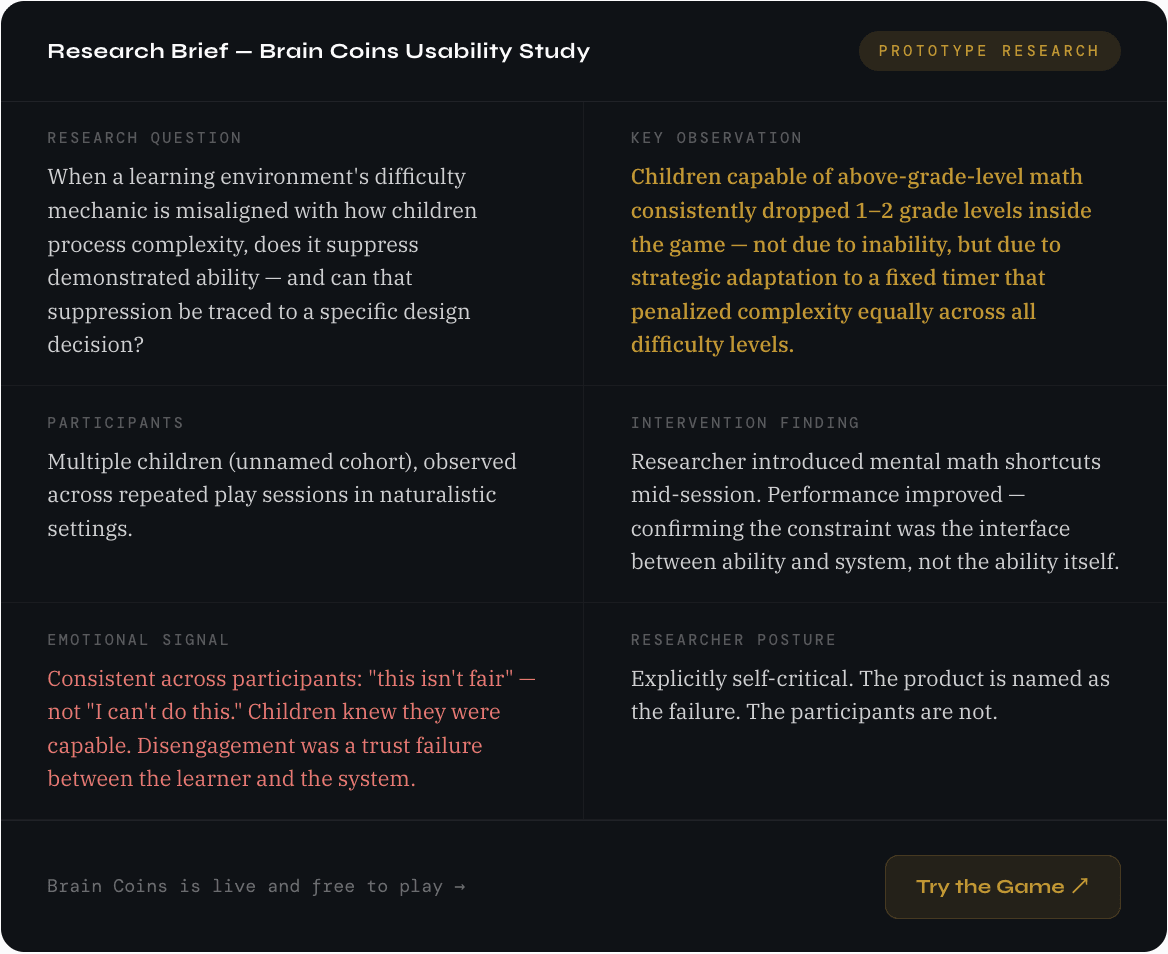

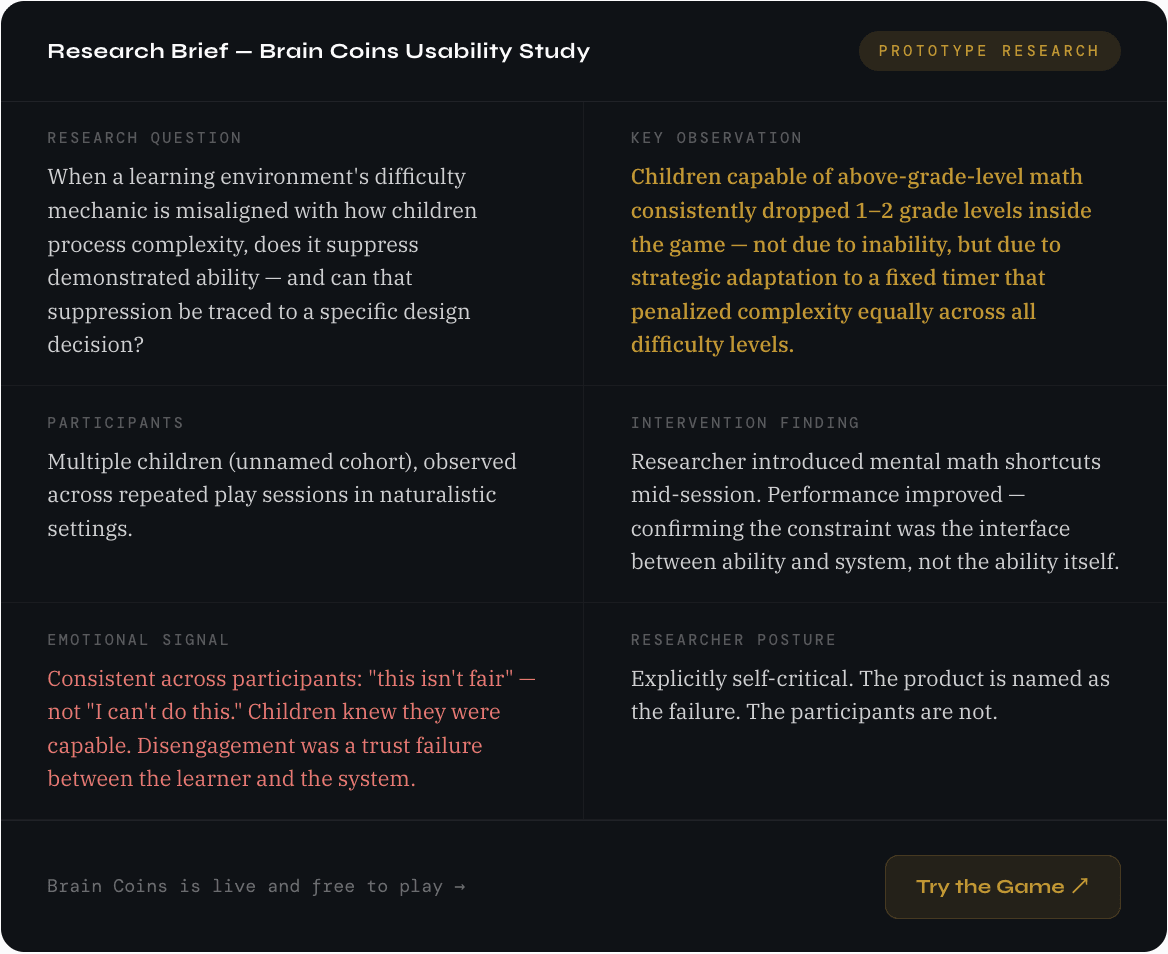

| Name/Title | Method | Product |
|---|---|---|
| Miyya Cody, Full Stack Product Designer | Prototype usability testing, behavioral observation, in-session intervention | Brain Coins (researcher-built math game) · Cohort: multiple children, repeated sessions |

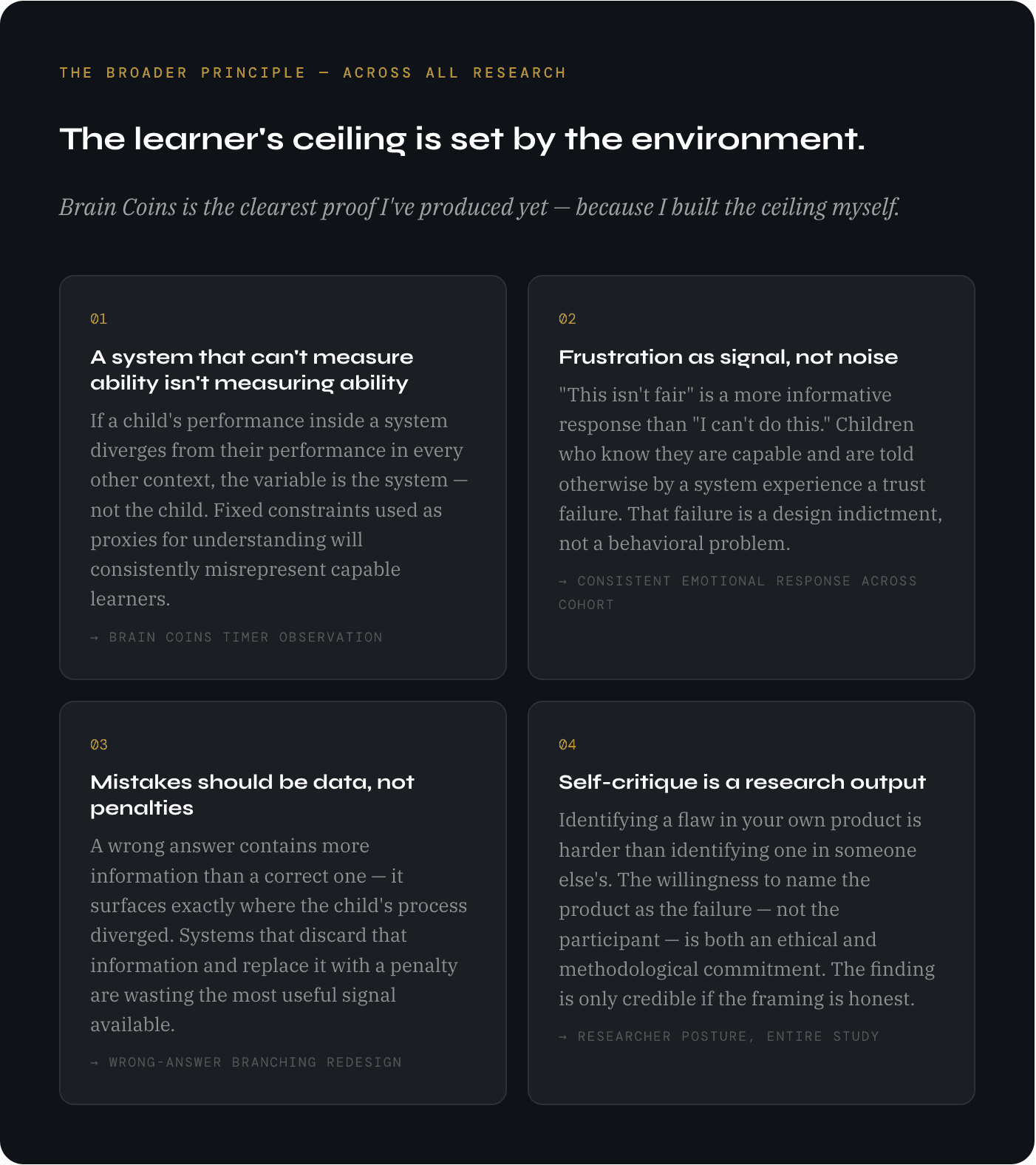

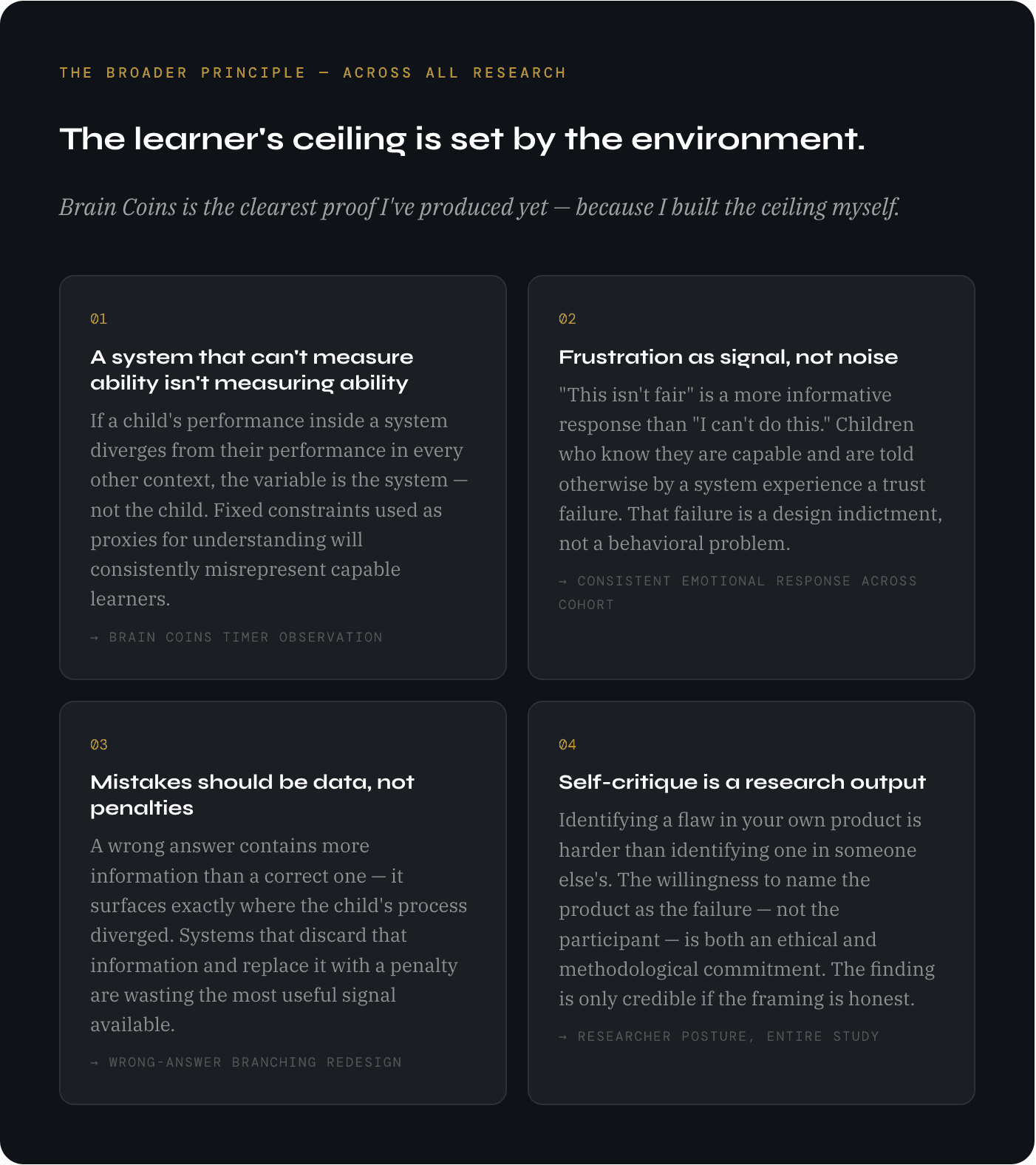

I built a math game for kids. Kids capable of above-grade-level math started playing it one to two grade levels below where they actually perform. That's not a learner problem. I designed the flaw. Here's what I found when I looked at my own product honestly.

What I observed and why it matters

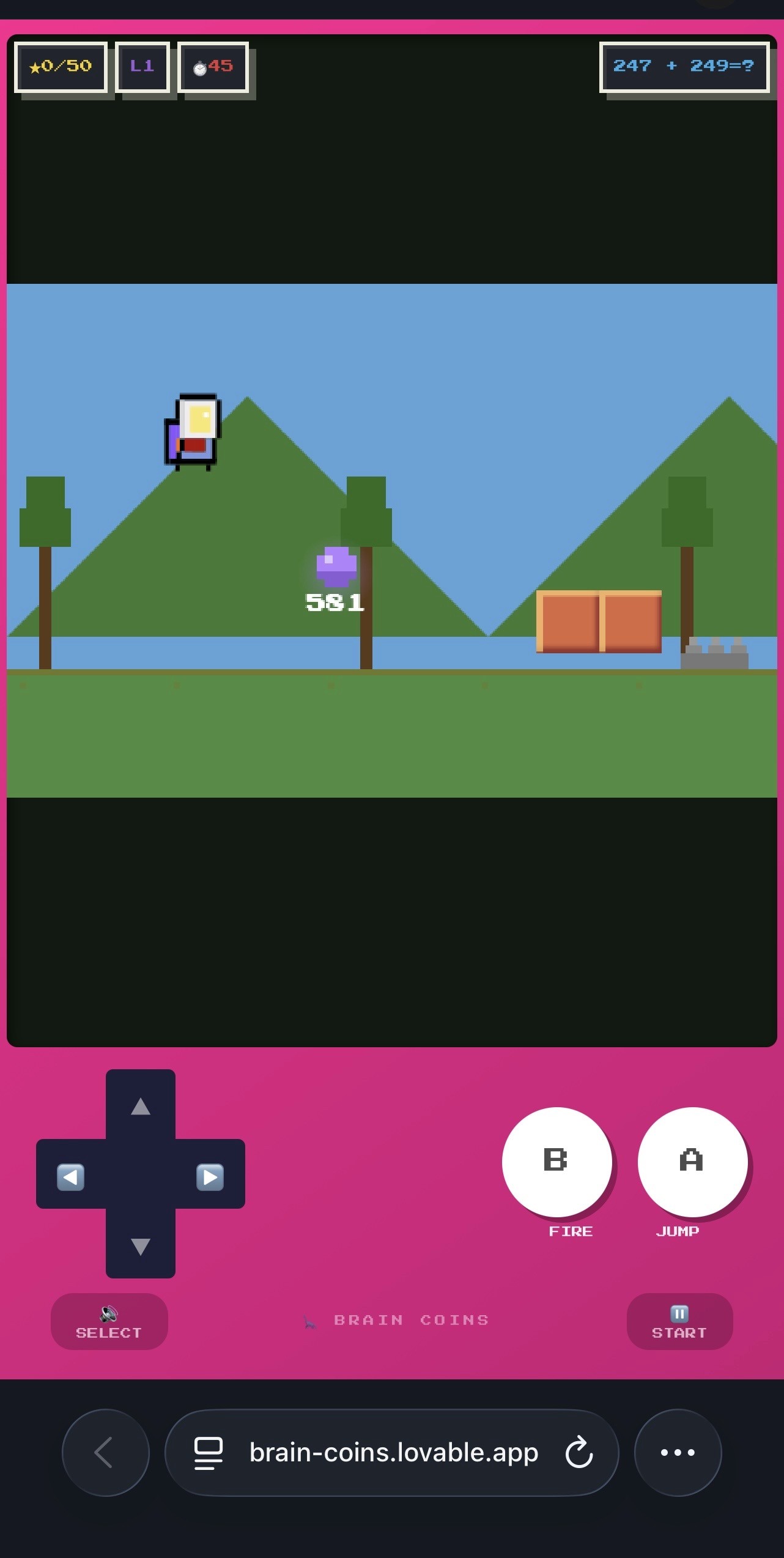

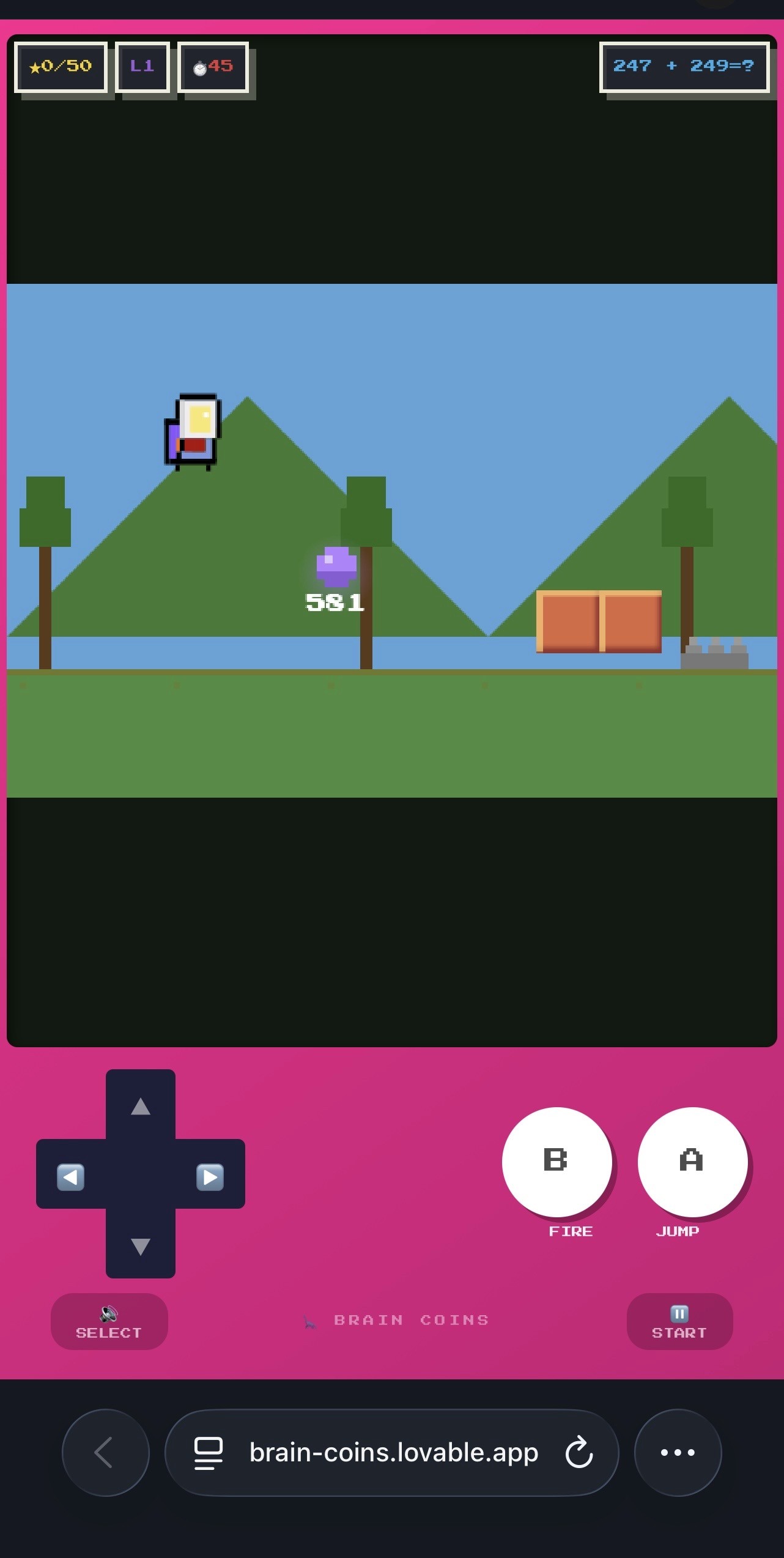

Brain Coins is an arcade-style math game I built: solve problems, earn coins, level up. Fast, pressured, immediately satisfying. In many ways it works. Kids like it. They come back to it.

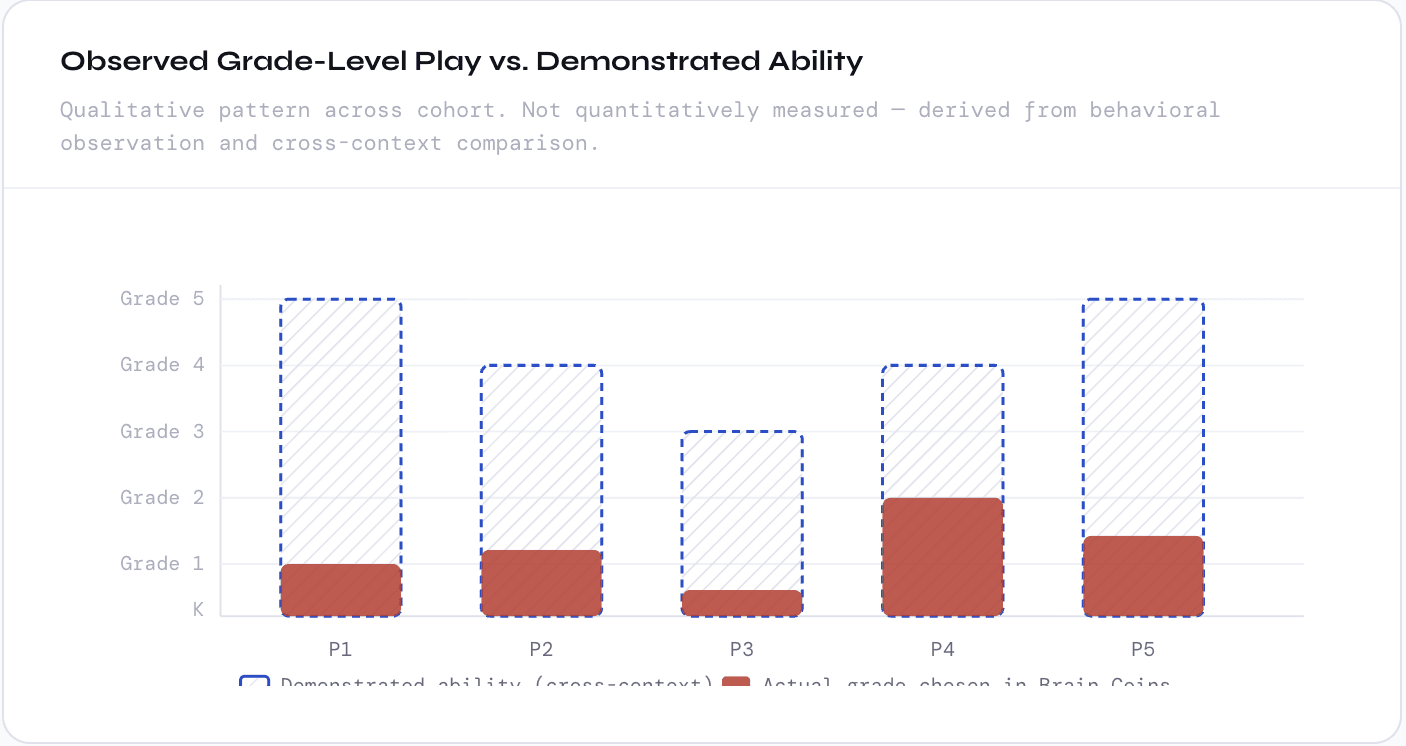

But several of the children I've been studying across this research are playing Brain Coins at kindergarten or first-grade level. These are kids I've watched build math problems above their grade level for fun, solve multiplication on a playground without pausing, and voluntarily extend problems by calculating comparative ratios. In Brain Coins they play one to two full years below where they demonstrably perform in every other context.

I spent time trying to explain this as a context effect, an age-appropriate preference for easy wins, a different kind of engagement. None of those explanations held. The pattern was too consistent and the emotional signal too specific.

Self-Critique

The correct framing, once I was honest about it: I built a game that replicates one of the worst patterns of traditional education. I used a fixed, inflexible constraint as a proxy for understanding, and penalized children who couldn't perform within that constraint — regardless of whether they actually knew the material. This is what a timed test does. I built Brain Coins to be different from the classroom. The timer made it the same.

The mechanism: how a timer creates a grade floor

Brain Coins gives every problem the same time window regardless of difficulty. A single-digit addition problem and a three-digit subtraction problem get identical clocks. That design choice breaks everything.

Single-digit addition is pure recall. The cognitive path is direct: see the problem, retrieve the answer, enter it. Multi-digit subtraction requires regrouping, borrowing, multi-step processing — a fundamentally longer chain. Giving both problems the same window doesn't measure the same thing across difficulty levels. For simple problems, it measures recall speed. For hard problems, it measures whether the learner can compress a longer cognitive process into a window calibrated for a shorter one. That's not math ability. That's processing speed under constraint.
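To make the asymmetry concrete, here is a minimal sketch of how a fixed window shrinks the time available per cognitive step. The 10-second window and the step counts are assumptions chosen for illustration; they are not values taken from Brain Coins.

```python
# Illustrative only: the window length and step counts are assumed values,
# not measurements from Brain Coins.
FIXED_WINDOW_SECONDS = 10.0


def seconds_per_step(window_seconds: float, cognitive_steps: int) -> float:
    """Time available for each cognitive step when the window is fixed."""
    if cognitive_steps < 1:
        raise ValueError("a problem needs at least one step")
    return window_seconds / cognitive_steps


# Hypothetical step counts: recall is modeled as one step, three-digit
# subtraction with regrouping as five steps.
recall_budget = seconds_per_step(FIXED_WINDOW_SECONDS, 1)
regrouping_budget = seconds_per_step(FIXED_WINDOW_SECONDS, 5)

print(recall_budget)      # 10.0
print(regrouping_budget)  # 2.0
```

Under these assumed numbers, the same clock gives the recall problem five times more time per step than the regrouping problem.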

So children do what any rational agent would do: they find the difficulty level where the constraint doesn't punish them. They play at kindergarten level — not because they want easy problems, but because easy problems are the only ones where the game is measuring what they actually know rather than how fast they can think under pressure.

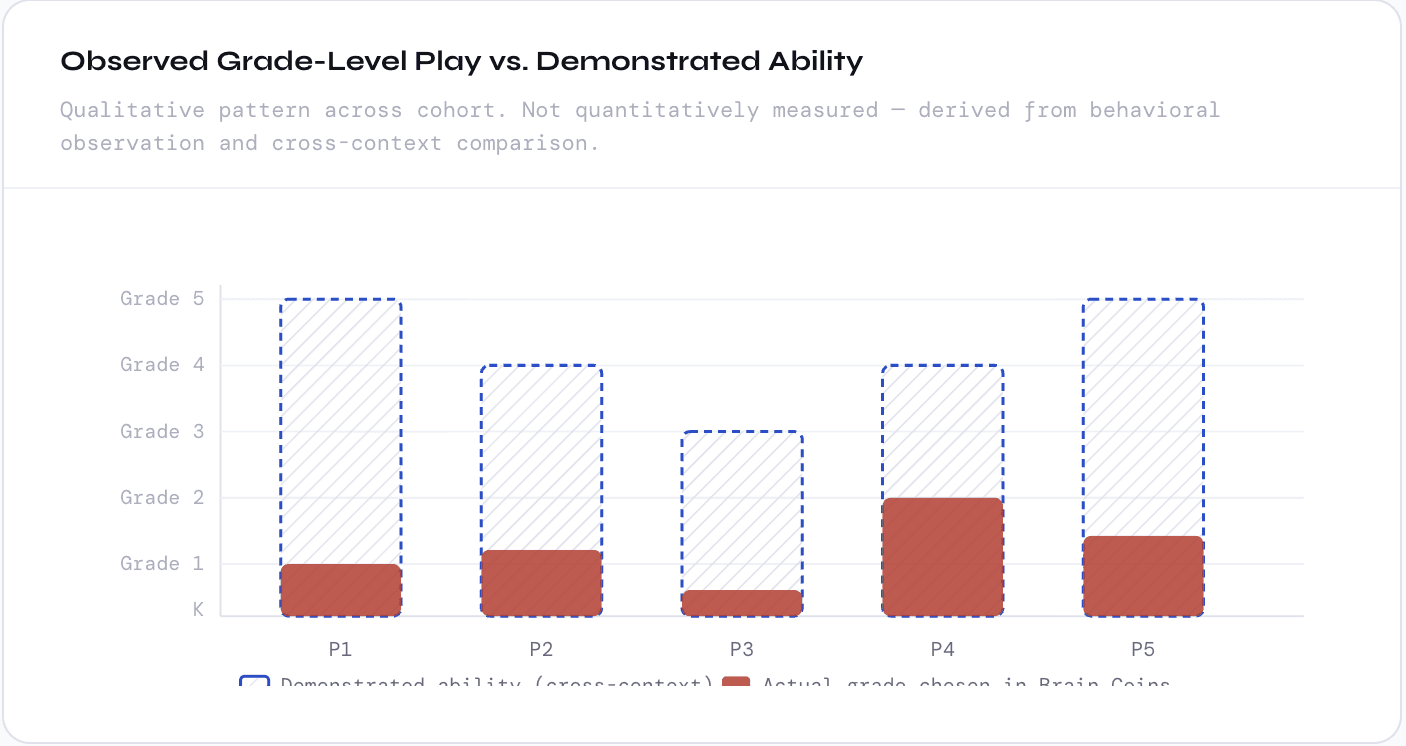

Chart (qualitative model): demonstrated ability (cross-context) vs. actual grade chosen in Brain Coins

The behavior pattern: what children do when a system doesn't trust them

Observed behavior: Child attempts grade-level problem

A child who performs at or above grade level in every other context selects a problem matching their actual ability inside Brain Coins.

Observed behavior: Timer expires before problem is solved

The cognitive steps required — regrouping, multi-step processing — take longer than the fixed window allows. The game marks it wrong. The child loses time and coins. This repeats on consecutive problems.

Emotional signal: Frustration (specifically injustice, not incapacity)

The emotional response is consistent across the cohort. It's not quiet resignation or "I don't understand." It's active, specific protest.

"This isn't fair." — repeated across multiple participants, unprompted, in nearly identical language.

Children knew they were capable. The frustration was a trust failure — the system was telling them something false about themselves, and they knew it.

Strategic adaptation: Child drops to a level where the timer isn't a constraint

After a streak of missed problems at grade level, children drop back to easy rounds — kindergarten, first grade — and stay there. Not because they want easy problems. Because easy problems are the only format where the game can't misrepresent their ability back to them.

Research outcome: Game logs a kindergarten-level player. The actual ability is invisible.

The system records failure. The child internalizes it if the environment has enough authority. The real ability — which would show up clearly in every other context — sits behind a design decision, untested and invisible. This is structurally identical to a timed standardized test.

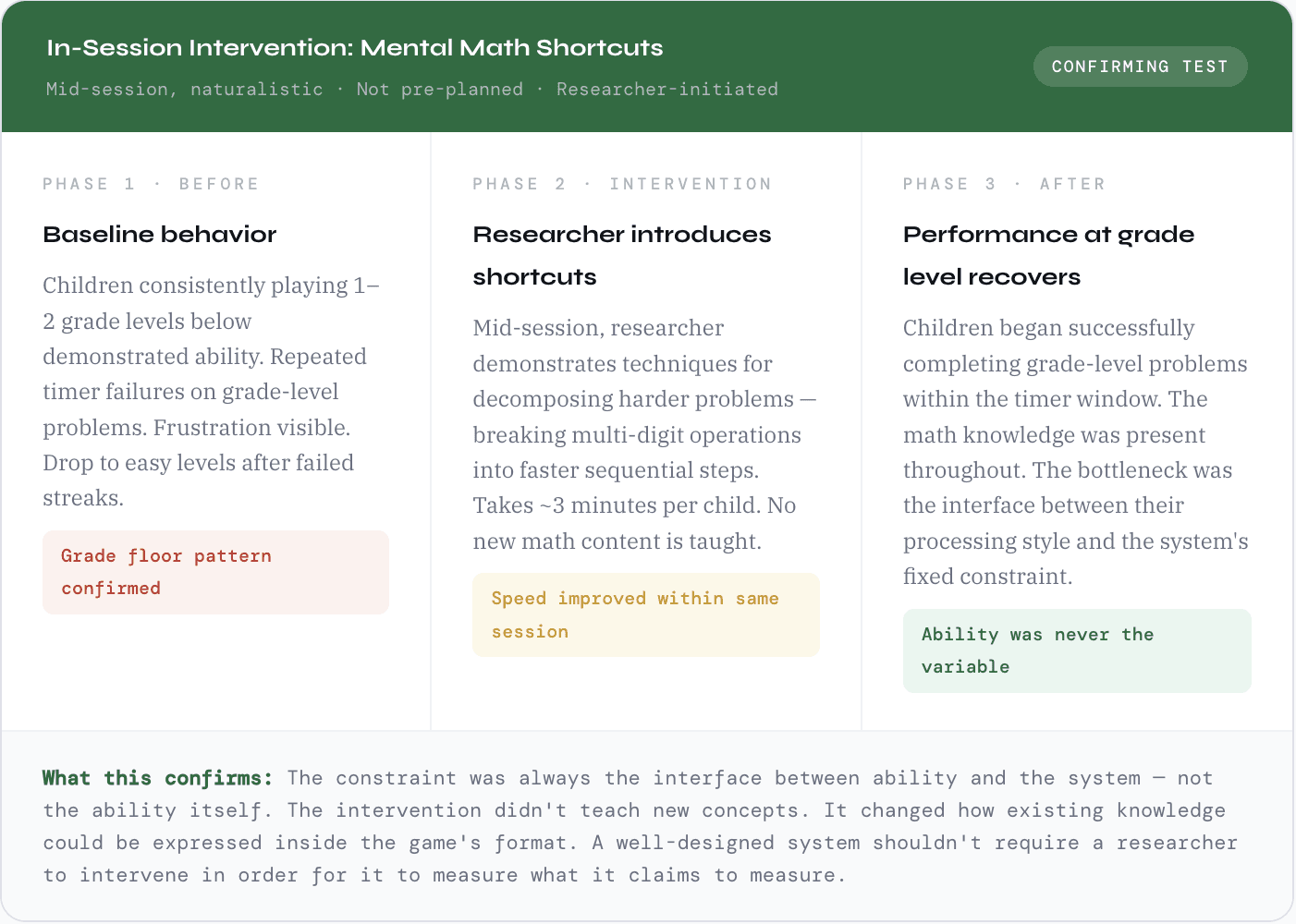

The shortcuts didn't teach them new math. They taught them how to translate what they already knew into a format the game could recognize. That's a design failure, not a learning failure.

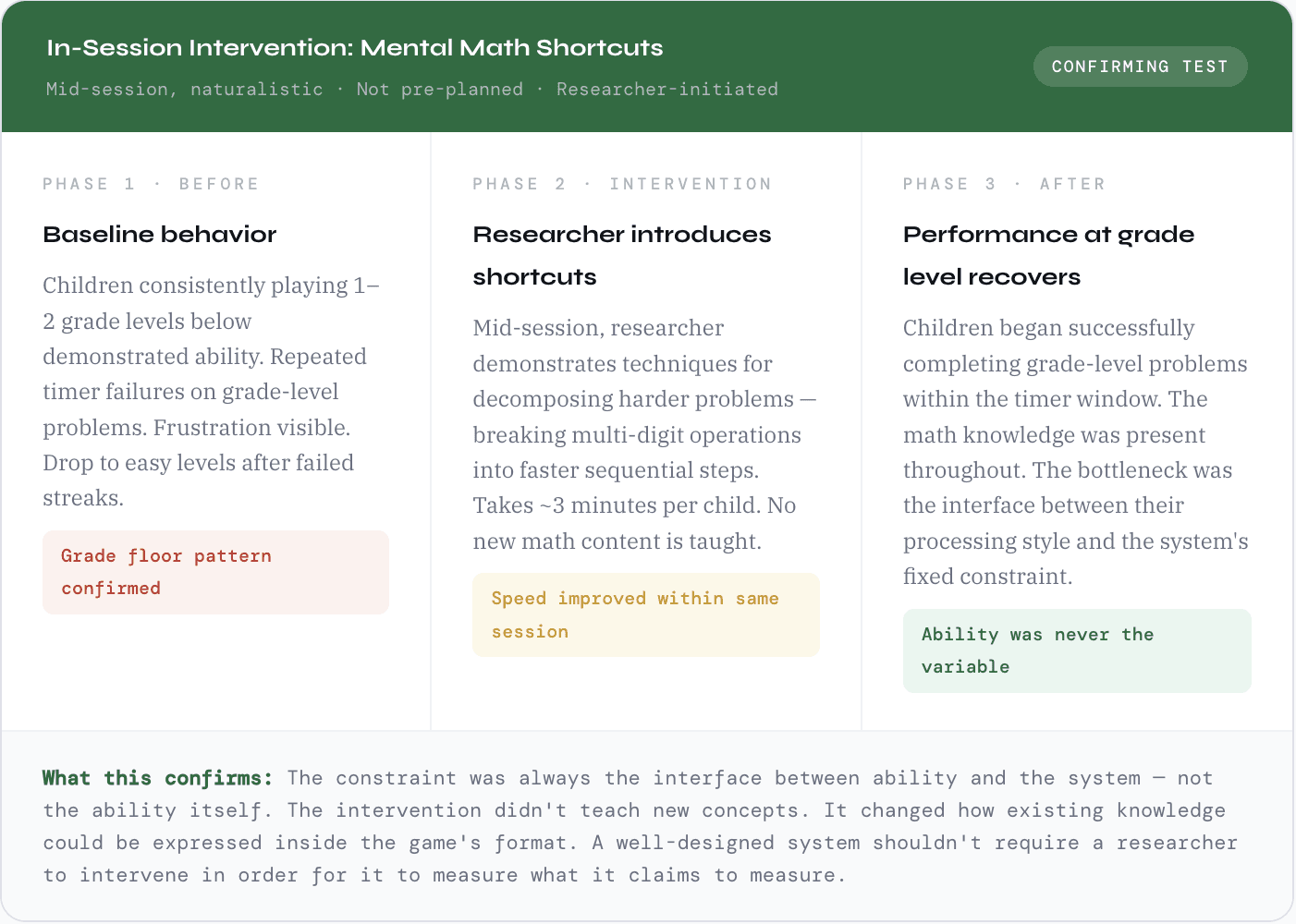

Research note, in-session intervention

The intervention that confirmed the diagnosis

Four mechanic redesigns — derived from the failure patterns

These aren't theoretical improvements. Each one maps directly to a specific observed failure. The goal isn't to remove pressure — kids like the urgency and it should stay. The goal is to make the timer serve the learner instead of gatekeeping them.

Complexity-Adjusted Timer

Status: Planned

Problem it solves

A fixed timer treats single-digit recall and multi-step subtraction as cognitively equivalent. They aren't. The timer was measuring speed, not ability.

Redesign

Timer window scales with problem complexity — calibrated to the actual cognitive steps required at each grade level. Grade 1 problems get a short window (they should be fast). Grade 4 problems get enough runway to actually think. Speed is still rewarded; complexity is no longer penalized.
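As a rough sketch of how a complexity-adjusted timer could work (this mechanic is planned, so this is not the current Brain Coins code), the window can be derived from an estimated step count per problem. The `Problem` type, the constants, and the coin formula are all hypothetical; real values would have to come from playtesting.

```python
from dataclasses import dataclass

# All constants are hypothetical placeholders, not tuned game values.
BASE_SECONDS = 4.0       # time to read the problem and enter an answer
SECONDS_PER_STEP = 3.0   # extra time granted per cognitive step
MAX_SPEED_BONUS = 10     # coins awarded for an instant correct answer


@dataclass(frozen=True)
class Problem:
    prompt: str
    grade: int
    cognitive_steps: int  # 1 for pure recall, more for regrouping


def timer_window(problem: Problem) -> float:
    """Scale the time window with the number of steps the problem needs."""
    steps = max(1, problem.cognitive_steps)
    return BASE_SECONDS + SECONDS_PER_STEP * steps


def speed_bonus(problem: Problem, seconds_used: float) -> int:
    """Reward speed relative to this problem's own window."""
    window = timer_window(problem)
    if seconds_used > window:
        return 0  # timed out: no speed bonus
    fraction_left = (window - seconds_used) / window
    return round(MAX_SPEED_BONUS * fraction_left)


easy = Problem("7 + 5", grade=1, cognitive_steps=1)
hard = Problem("432 - 178", grade=4, cognitive_steps=5)

print(timer_window(easy))  # 7.0
print(timer_window(hard))  # 19.0
```

Because the bonus is computed against each problem's own window, a child who uses half the available time earns the same bonus on a Grade 4 problem as on a Grade 1 problem. Speed still pays; complexity no longer costs.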

Bonus Time as Streak Reward

Status: Planned

Problem it solves

Children who attempt difficult problems and miss consecutive answers experience a compounding pressure spiral — less time, more stress, more misses — which drives the retreat to easy levels.

Redesign

Solving a streak correctly earns bonus seconds on the next problem. Keeps the arcade pressure alive but gives a pressure valve. Speed is still rewarded — it now compounds into breathing room rather than just points. A child attempting hard problems who misses once doesn't immediately lose everything.
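One way the streak mechanic could be modeled is sketched below. The streak length, bonus size, and cap are assumed values, and the rule that a miss resets the streak but keeps time already earned is one possible reading of "doesn't immediately lose everything", not a settled design.

```python
from dataclasses import dataclass

# Hypothetical tuning values, chosen only to illustrate the mechanic.
STREAK_LENGTH = 3         # correct answers in a row needed to earn a bonus
BONUS_SECONDS = 2.0       # seconds added per completed streak
MAX_BANKED_SECONDS = 6.0  # cap so the timer still creates urgency


@dataclass(frozen=True)
class StreakState:
    streak: int = 0
    banked_seconds: float = 0.0


def record_answer(state: StreakState, correct: bool) -> StreakState:
    """Return the new state after one answer."""
    if not correct:
        # A miss resets the streak but keeps time already earned.
        return StreakState(streak=0, banked_seconds=state.banked_seconds)
    streak = state.streak + 1
    banked = state.banked_seconds
    if streak % STREAK_LENGTH == 0:
        banked = min(MAX_BANKED_SECONDS, banked + BONUS_SECONDS)
    return StreakState(streak=streak, banked_seconds=banked)


def next_window(base_window: float, state: StreakState) -> tuple[float, StreakState]:
    """Spend all banked seconds on the next problem's window."""
    spent = StreakState(streak=state.streak, banked_seconds=0.0)
    return base_window + state.banked_seconds, spent
```

For example, three correct answers followed by one miss still leave 2.0 banked seconds, so the next problem with a 10-second base window would run for 12 seconds.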

Pre-Round Strategy Hint

Status: Testing

Problem it solves

The intervention worked — but required a researcher to be present. Shortcuts shouldn't need a human intermediary. They should be part of the game's design.

Redesign

A quick strategy tip appears before each round at harder difficulty levels — how to decompose the problem type, what to look for, a faster processing path. Coaching is built into the product as a game mechanic, not added as remediation. The child isn't told to "try harder." They're given a better tool.
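A minimal sketch of the hint lookup, assuming hints are keyed by problem type and only shown from a certain grade upward. The grade threshold, the problem-type names, and the hint wording are all hypothetical; they are not the hints used in the sessions.

```python
from typing import Optional

# Hypothetical threshold and hint text; the problem-type keys are
# illustrative names, not identifiers from the game.
HINT_MIN_GRADE = 3

STRATEGY_HINTS = {
    "subtraction_with_regrouping": (
        "Subtract in chunks: take away the hundreds, then the tens, then the ones."
    ),
    "multi_digit_addition": (
        "Add the tens first, then the ones, then combine the two results."
    ),
}


def pre_round_hint(problem_type: str, grade: int) -> Optional[str]:
    """Return a tip to show before the round, or None if no tip applies."""
    if grade < HINT_MIN_GRADE:
        return None  # easy rounds are recall, so no strategy tip is shown
    return STRATEGY_HINTS.get(problem_type)


print(pre_round_hint("subtraction_with_regrouping", grade=4))
```

Returning `None` for easy rounds and for unknown problem types keeps the hint optional: the round starts normally whenever there is nothing useful to show.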

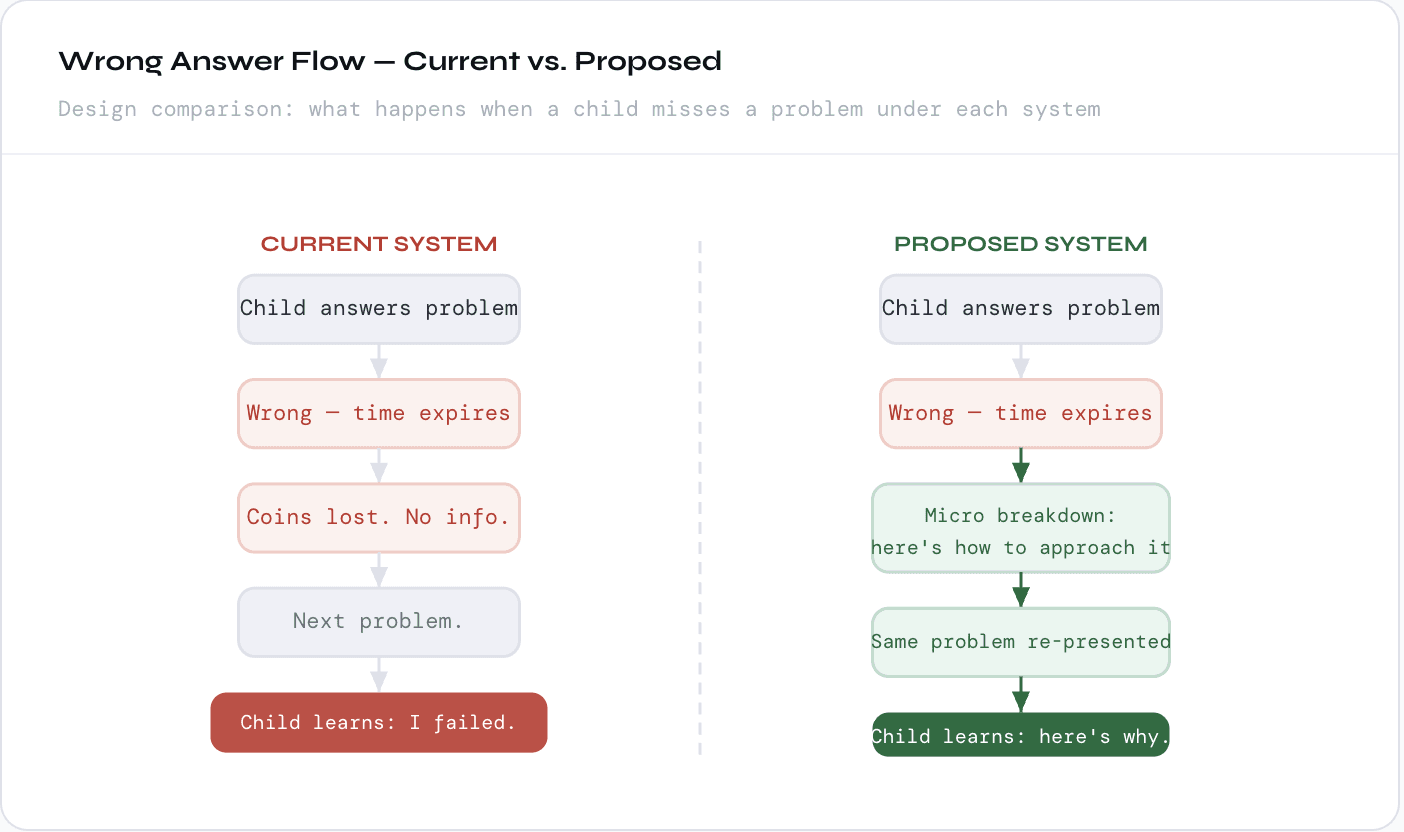

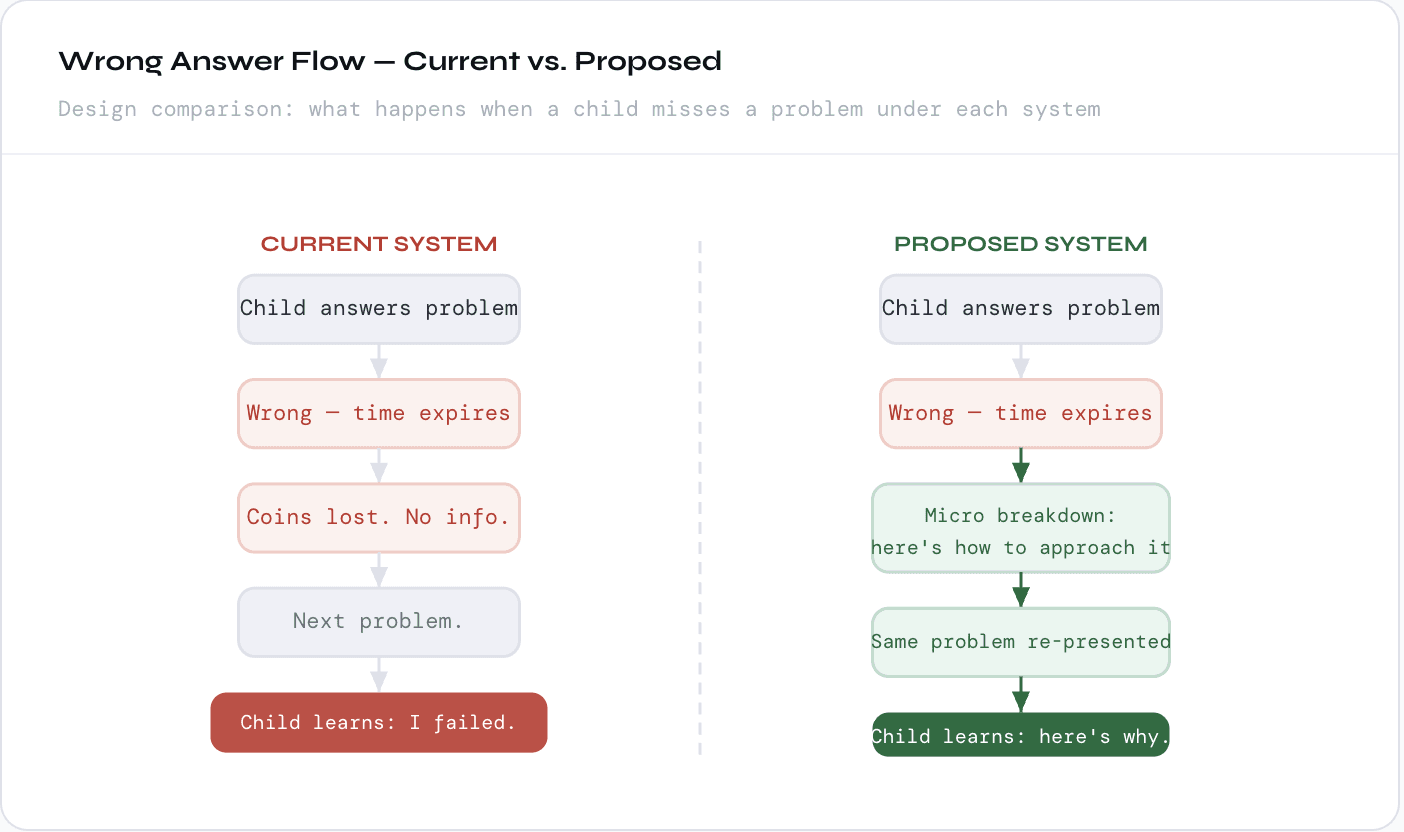

Wrong Answers That Branch, Not Dead-End

Status: Planned

Problem it solves

Currently, a wrong answer costs time and coins — and nothing else. There's no information. No path forward. The child learns they failed; they don't learn why or how to recover. This turns mistakes into dead ends rather than data.

Redesign

A missed problem branches into a micro-breakdown: what the correct approach is, where the process went wrong, and the same problem re-presented. The child still wants to get it right, because coins are on the line. But the miss becomes a learning moment instead of a penalty. Mistakes become the mechanism for understanding rather than evidence of failure.
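The branching idea can be sketched as an answer handler that returns a breakdown and a retry instead of only a penalty. Everything here is an assumption for illustration: the `Problem` and `Outcome` types, the coin amount, and the worked steps. The sketch shows the correct approach and re-presents the problem; it does not try to diagnose the child's own process.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Problem:
    prompt: str
    answer: int
    worked_steps: tuple[str, ...]  # the correct approach, one step per entry


@dataclass(frozen=True)
class Outcome:
    correct: bool
    coins: int
    breakdown: tuple[str, ...] = ()   # shown only after a miss
    retry: Optional[Problem] = None   # same problem, re-presented


def submit(problem: Problem, given: int, coins_at_stake: int = 5) -> Outcome:
    """Score an answer; a miss branches into a breakdown plus a retry."""
    if given == problem.answer:
        return Outcome(correct=True, coins=coins_at_stake)
    # No coins yet, but the child sees the steps and gets the problem again.
    return Outcome(
        correct=False,
        coins=0,
        breakdown=problem.worked_steps,
        retry=problem,
    )


problem = Problem(
    prompt="432 - 178",
    answer=254,
    worked_steps=("432 - 100 = 332", "332 - 70 = 262", "262 - 8 = 254"),
)
miss = submit(problem, 264)
hit = submit(miss.retry, 254)
```

In this sketch the coins stay on the line: the miss earns nothing, but answering the retried problem correctly still pays out.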

On methodology: This study was conducted without experimental controls. The grade-drop pattern was identified through behavioral observation and cross-context comparison, not quantitative measurement. The chart in this article is a qualitative model, not plotted data. The in-session intervention was improvised, not pre-planned. All participants are anonymized. The researcher's explicit self-critical posture is intentional and is considered part of the methodological record.

Try the product: Brain Coins is live at brain-coins.lovable.app. The mechanics described as "planned" above are not yet implemented in the current version — this article documents the research case for changing them.

Theoretical grounding: Bjork, R.A. (1994). Memory and metamemory considerations in the training of human beings — on desirable difficulties. Dweck, C.S. (2006). Mindset — on fixed vs. growth framing of ability. Gee, J.P. (2003). What Video Games Have to Teach Us About Learning — on game design as learning environment design.
